The preparation of future healthcare providers requires robust, reliable methods to evaluate the efficacy of various clinical education modalities. Since the early 2000s, simulation has dramatically altered the landscape of nursing education, prompting researchers to seek instruments that could reliably measure how well different environments meet the learning needs of students. The development and subsequent refinement of the Clinical Learning Environment Comparison Survey (CLECS) represent a crucial effort in this domain, culminating in the newly launched CLECS 3.0. This advanced iteration significantly expands upon CLECS 2.0 to address reliability and offers greater flexibility for contemporary research. This HealthySimulation.com article by Dr. Teresa Gore provides an overview of CLECS 3.0, which is part of the Evaluating Healthcare Simulation Tools made available by Leighton et al.

The Foundation: CLECS and the Pandemic Pivot to CLECS 2.0

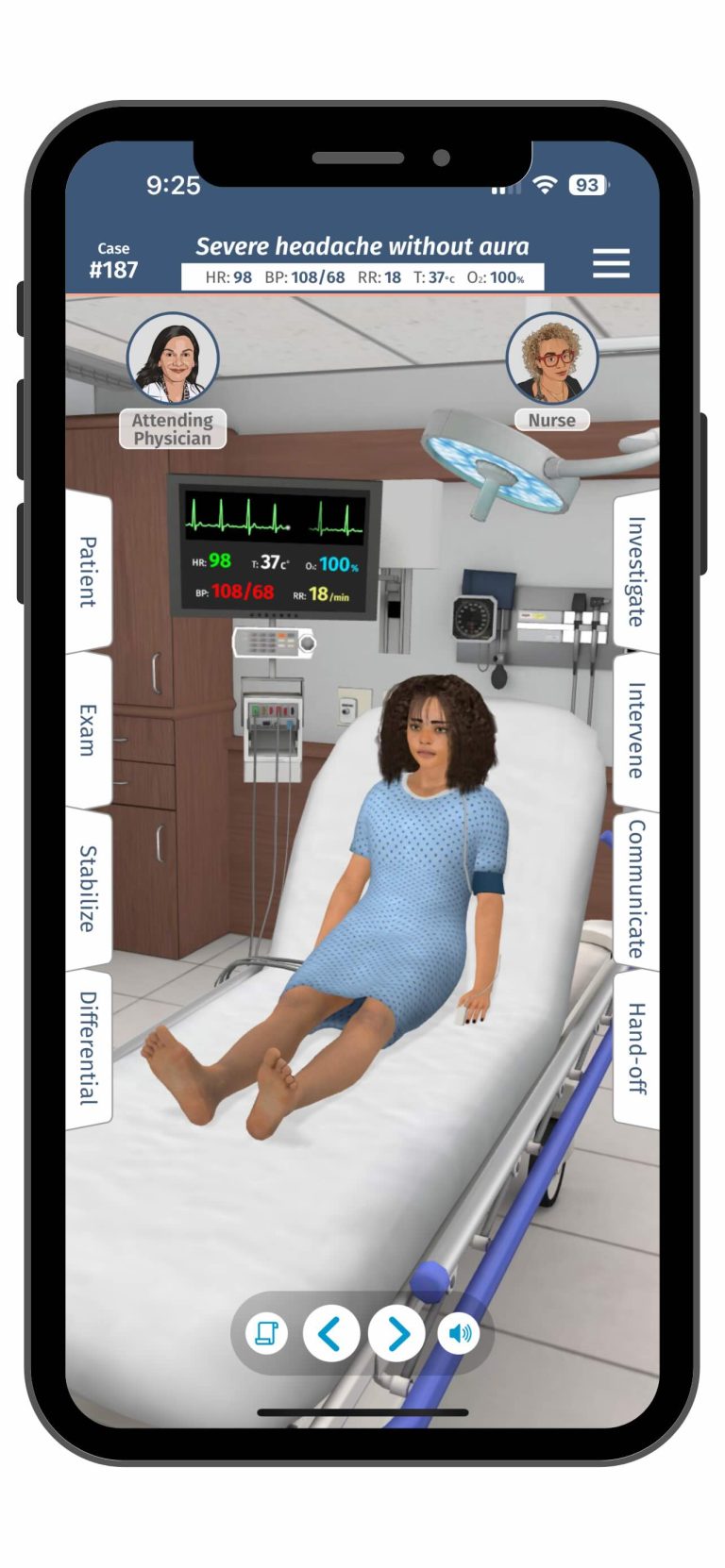

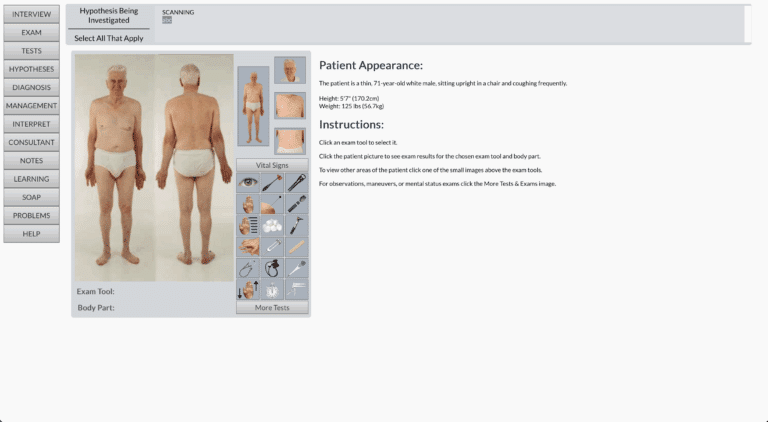

The original CLECS was established in 2007 and gained prominence when the tool was used in the National Council of State Boards of Nursing (NCSBN) National Simulation Study, which explored the extent to which simulation could replace traditional clinical hours. However, the global upheaval caused by the COVID-19 pandemic necessitated a rapid expansion of the instrument’s focus. When the pandemic forced nursing educators to replace their usual clinical teaching methods, many relied on screen-based simulation (SBS) to provide safe, clinically based learning opportunities and to ensure students could meet regulatory requirements for progression and graduation. In SBS, students provide care for graphical representations of patients on a computer screen.

This shift prompted the development of the Clinical Learning Environment Comparison Survey 2.0 (CLECS 2.0), which adapted the original instrument to compare three distinct learning environments: traditional clinical, face-to-face simulation, and the newly critical screen-based simulation (SBS). The core research question guiding the initial use of CLECS 2.0 was how well students perceived their learning needs to be met across these three modalities.

This quantitative descriptive study, conducted during the first half of 2020, used snowball sampling, relying on faculty and simulation sites to make the survey available after campus closures. The study gathered 113 complete responses from students across the United States, Japan, and Canada.

The Challenge of Subscales in CLECS 2.0

The CLECS 2.0 study revealed crucial data about the emerging role of SBS and highlighted significant differences across the three clinical settings. The items in the CLECS 2.0 were initially grouped into six subscales, the same as the original CLECS, reflecting core learning areas in nursing:

- Communication

- Nursing Process

- Holism

- Critical Thinking

- Self-Efficacy

- Teaching Learning Dyad

The findings revealed that students perceived their learning needs to be consistently better met in traditional clinical settings than in either face-to-face simulation or screen-based simulation. Specifically, SBS scored lowest on every item of the CLECS 2.0. The preliminary analysis based on the six subscales suggested several reasons for these low scores. For instance, low communication scores in SBS were attributed to the lack of real-time conversation and interaction, a consequence of the absence of robust artificial intelligence. The nursing process, a dynamic and unpredictable problem-solving sequence, was difficult to replicate naturally in SBS. Similarly, the asynchronous nature of many SBS programs often hindered the teaching-learning dyad and self-efficacy, because students lacked the immediate feedback from instructors and peers that is common in traditional clinical and face-to-face simulation environments, such as post-conferences or debriefing. The holism scores were also low but could be readily raised by incorporating these aspects into SBS software design.

Despite this insightful discussion based on the grouping of items, the study suffered from a small sample size, precluding the researchers from conducting the necessary reliability analysis or confirmatory factor analysis (CFA) for the CLECS 2.0. This meant that while the subscales were used for discussion, their internal consistency and statistical validity within the context of the three-environment comparison remained unconfirmed. The inability to generalize the findings further emphasized the need for statistical validation.
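To make the idea of an internal consistency check concrete, the sketch below computes Cronbach's alpha, one widely used reliability statistic. The article does not state which statistics the CLECS developers calculated, so this is an illustration only; the function name `cronbach_alpha` and the sample ratings are hypothetical and are not CLECS data.

```python
from statistics import variance


def cronbach_alpha(responses):
    """Return Cronbach's alpha for a list of respondent rows.

    Each row holds one respondent's ratings for the items of a single subscale.
    """
    n_items = len(responses[0])
    if n_items < 2 or len(responses) < 2:
        raise ValueError("need at least two items and two respondents")
    if any(len(row) != n_items for row in responses):
        raise ValueError("every respondent must rate every item")

    # Sample variance of each item across respondents.
    item_variances = [
        variance([row[i] for row in responses]) for i in range(n_items)
    ]
    # Sample variance of each respondent's total score.
    total_variance = variance([sum(row) for row in responses])
    if total_variance == 0:
        raise ValueError("total scores do not vary; alpha is undefined")

    return (n_items / (n_items - 1)) * (1 - sum(item_variances) / total_variance)


# Hypothetical ratings: five respondents, three items (not real survey data).
sample = [[4, 4, 3], [3, 3, 3], [2, 2, 1], [4, 3, 4], [1, 2, 1]]
alpha = cronbach_alpha(sample)
print(round(alpha, 3))
```

Estimates like this are unstable when few respondents are available, which is one reason a small sample can rule out a meaningful reliability analysis.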

View the HealthySimulation.com Webinar Expanding CLECS 2.0: Clinical Learning Environment Comparison Survey Evaluation Tool to learn more!

The Expansion: CLECS 3.0 and Data-Driven Reliability

The leap from CLECS 2.0 to CLECS 3.0 represents a crucial methodological expansion, driven by the acquisition of high-quality data. The transition was made possible because the National Council of State Boards of Nursing (NCSBN) conducted another study using the CLECS 2.0. Because the NCSBN secured a sufficiently large sample and shared the data, the development team was able to perform the comprehensive reliability assessment that was missing from the initial CLECS 2.0 analysis.

This assessment revealed that the factor structure, or how the survey items group together conceptually, was not consistent across all three learning environments. Specifically, the data showed that each environment had a different number of subscales. Based on this reliability assessment, the researchers aligned the new structure of the CLECS 3.0 with the results derived from the face-to-face simulation analysis.

The most significant change in the CLECS 3.0 is the refinement and reduction of the factor structure: the six original subscales were streamlined into just two new subscales. An important note is that none of the items themselves have changed; they have merely been rearranged based on empirical evidence of how they cluster statistically.

The two validated subscales of the CLECS 3.0 are:

- **Clinical Learning Development (23 items):** This is the significantly larger component of the tool.

- **Individualized Patient Focus (5 items):** This is the smaller component.

This revision provides researchers with an instrument that produces more valid and reliable data for comparing perceptions of learning across modalities than the structure used in CLECS 2.0.
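As a rough illustration of how one respondent's ratings might be summarized under the two-subscale structure, the sketch below averages item ratings per subscale for each learning environment. The article gives only the item counts (23 and 5), not which items belong to which subscale, so the index ranges, the rating values, and the function name `subscale_means` are all hypothetical placeholders; the published CLECS 3.0 should be consulted for the actual item assignment and scoring guidance.

```python
from statistics import mean

# Hypothetical item positions: only the counts (23 and 5) come from the article.
SUBSCALES = {
    "Clinical Learning Development": range(0, 23),
    "Individualized Patient Focus": range(23, 28),
}
TOTAL_ITEMS = 28


def subscale_means(ratings_by_environment):
    """Average one respondent's item ratings per subscale, per environment.

    ratings_by_environment maps an environment name to a list of 28 ratings.
    """
    summary = {}
    for environment, ratings in ratings_by_environment.items():
        if len(ratings) != TOTAL_ITEMS:
            raise ValueError(f"{environment}: expected {TOTAL_ITEMS} ratings")
        summary[environment] = {
            name: mean([ratings[i] for i in positions])
            for name, positions in SUBSCALES.items()
        }
    return summary


# Made-up ratings for two environments chosen by the user.
example = {
    "traditional clinical": [4] * 23 + [3] * 5,
    "screen-based simulation": [2] * 28,
}
for environment, scores in subscale_means(example).items():
    print(environment, scores)
```

Keying the input by environment name keeps the sketch independent of any fixed set of environments.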


Enhanced Utility and Accessibility of CLECS 3.0

Beyond the statistical refinement, CLECS 3.0 introduces practical enhancements that boost the tool’s utility in scholarly and clinical practice. Unlike its predecessors, which were preset to compare specific environments, the CLECS 3.0 features an empty section where users can enter the clinical learning environments they wish to compare. This flexibility allows educators and researchers to compare any combination of learning environments, such as face-to-face simulation, virtual reality, and traditional clinical. The researchers also believe that the items apply to the education of any healthcare profession, not just nursing.

The transition from CLECS 2.0 to CLECS 3.0 exemplifies how scholarly instruments are refined through rigorous, data-driven methodology. By completing a reliability assessment with a larger dataset, the developers have provided the field of health professions education with a validated, flexible, two-subscale tool for measuring how well different learning environments meet student needs in the ever-evolving landscape of clinical training.

Furthermore, the CLECS 3.0 tool is readily available under a Creative Commons license. This means that permission is not required to use the tool, whether for daily educational work or for formal research, fostering its widespread adoption within the academic community.

Access the CLECS 3.0 and Evaluating Healthcare Simulation Tools!